Epigraph

Praise belongs to God, Lord and Sustainer of the Worlds. (Al Quran 1:2)

God keeps the heavens and earth from vanishing; if they did vanish, no one else could stop them. God is most forbearing, most forgiving. (Al Quran 35:41)


Presented by Zia H Shah MD


Abstract

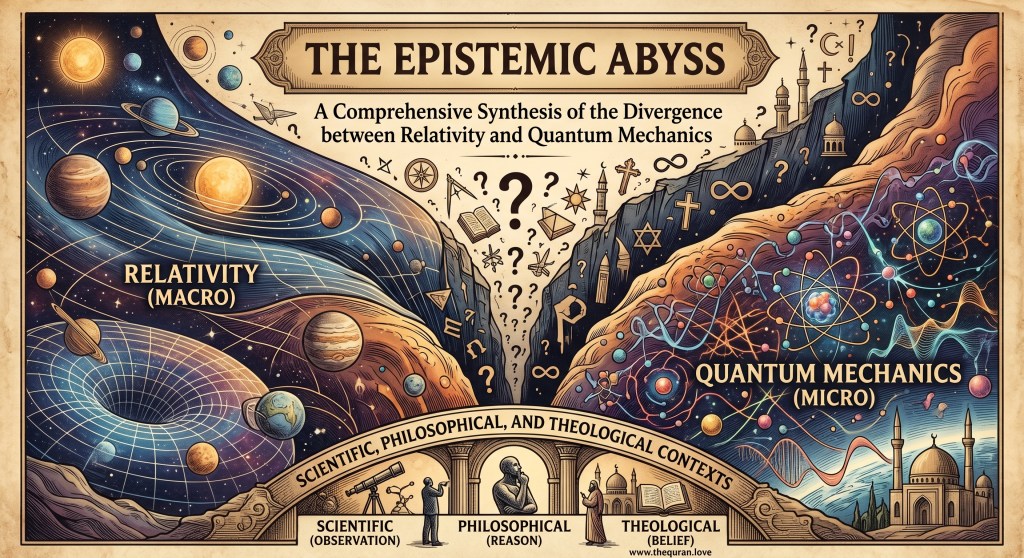

The simulation hypothesis posits that what is perceived as physical reality, including the cosmos, the laws of physics, and the phenomena of human consciousness, is an artificial construct generated by a high-fidelity computer simulation. This proposition represents a convergence of theoretical physics, information theory, and analytical philosophy, suggesting that the universe is fundamentally informational rather than material. Central to the contemporary discourse is the simulation argument formulated by Nick Bostrom, which uses a probabilistic trilemma to suggest that if posthuman civilizations ever develop the capacity to run high-fidelity “ancestor simulations,” the number of simulated observers would vastly exceed the number of biological ones, making it statistically probable that we reside in such a construct. Cosmological evidence cited by proponents includes the quantization of space-time at the Planck scale, the observer effect in quantum mechanics, and the mathematical precision of physical constants, which are interpreted as computational optimizations or “rendering” constraints. This report examines the technical foundations of digital physics, provides biographies and philosophical insights from primary proponents such as Nick Bostrom, Elon Musk, and Rizwan Virk, and explores the theological implications of a “Programmer God.” Furthermore, it addresses rigorous scientific critiques regarding energy constraints and “hidden complexity,” concluding that while currently untestable, the hypothesis serves as a profound heuristic for understanding the relationship between information, intelligence, and the fabric of the universe.

The Ontological Transition from Materialism to Information

The conceptual underpinnings of the simulation hypothesis represent a radical shift in the ontological status of the universe. For centuries, the prevailing scientific paradigm was one of physicalism, which asserted that the universe is composed of fundamental particles and forces existing within a continuous space-time. However, the 20th and 21st centuries have seen the emergence of “digital physics,” a framework suggesting that the universe is essentially a system of information processing. This transition is summarized by the “it from bit” doctrine proposed by physicist John Archibald Wheeler, which suggests that every physical entity derives its functional properties from the answers to binary yes/no questions.

The history of this idea is deeply intertwined with the development of automata theory and cellular automata. In the late 1960s, computer pioneer Konrad Zuse proposed that the universe itself functions as a massive, discrete computer. This “pancomputationalist” view suggests that the laws of nature are not merely described by mathematics but are mathematical algorithms being executed on a cosmic substrate. Within this framework, the simulation hypothesis is not a flight of science fiction fancy but a logical extension of the trend toward universal computation.

This shift has profound implications for how humanity understands the “base” of reality. If the universe is informational, then the material objects perceived by the senses are secondary manifestations of an underlying code. This leads to the “substrate independence” thesis: the idea that conscious experience does not require biological neurons but can emerge from any sufficiently complex informational system, whether carbon-based or silicon-based.

The Formal Framework: Nick Bostrom’s Simulation Argument

The contemporary resurgence of interest in simulated reality is largely credited to the philosopher Nick Bostrom. His 2003 paper, “Are You Living in a Computer Simulation?”, transformed a speculative thought experiment into a rigorous probabilistic analysis. Bostrom’s argument does not claim outright that humanity resides in a simulation; rather, it forces a choice between three distinct possibilities, suggesting that if we reject the first two, the third becomes a near certainty.

The Probabilistic Trilemma

Bostrom’s trilemma is formulated through a mathematical model that calculates the fraction of all observers with human-like experiences who are actually simulated. He defines several variables: $f_p$ as the fraction of all human-level civilizations that reach a posthuman stage; $\bar{N}$ as the average number of ancestor simulations run by such a civilization; and $\bar{H}$ as the average number of individuals who live in a civilization before it reaches a posthuman stage. The resulting fraction $f_{\text{sim}}$ of observers who are simulated is given by the formula:

$$f_{\text{sim}} = \frac{f_p \bar{N} \bar{H}}{(f_p \bar{N} \bar{H}) + \bar{H}}$$
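To make the behavior of this fraction concrete, here is a minimal Python sketch of the formula above. The function name and the sample inputs are illustrative assumptions, not values from Bostrom’s paper. Note that the average population term cancels, so the result depends only on the product of the fraction of posthuman civilizations and the average number of simulations each one runs.

```python
def simulated_fraction(f_p: float, n_bar: float, h_bar: float = 1.0) -> float:
    """Fraction of human-type observers who are simulated.

    f_p   : fraction of civilizations that reach a posthuman stage
    n_bar : average number of ancestor simulations per posthuman civilization
    h_bar : average number of individuals in a pre-posthuman civilization
            (it cancels out of the ratio; kept to mirror the formula)
    """
    if h_bar <= 0:
        raise ValueError("h_bar must be positive")
    simulated = f_p * n_bar * h_bar      # simulated observers per civilization
    return simulated / (simulated + h_bar)


# Hypothetical inputs, chosen only to show the three regimes of the trilemma.
for f_p, n_bar in [(0.0, 1e6), (1e-6, 1e3), (0.01, 1e6)]:
    print(f"f_p={f_p:g}, N={n_bar:g} -> f_sim={simulated_fraction(f_p, n_bar):.6f}")
```

With these made-up inputs, the fraction is zero when no civilization becomes posthuman, stays small when simulations are rare, and approaches one as soon as the product of the two factors is much larger than one.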

Bostrom argues that one of the following three propositions must be true:

| Proposition | Description | Philosophical Implication |
|---|---|---|
| Proposition 1 | The human species is very likely to go extinct before reaching a “posthuman” stage. | This suggests a “Great Filter” or technological ceiling that prevents the creation of high-fidelity simulations. |
| Proposition 2 | Any posthuman civilization is extremely unlikely to run a significant number of simulations of their evolutionary history. | This implies a “strong convergence” toward simulation abstinence due to ethical, legal, or aesthetic reasons. |
| Proposition 3 | We are almost certainly living in a computer simulation. | If the first two are false, the number of “Sims” will vastly outnumber biological “originals,” making our presence in base reality unlikely. |

Bostrom maintains that we currently have no strong empirical evidence to choose which proposition is correct, but he suggests that unless we are currently in a simulation, our descendants will likely never run them. This creates an anthropic link between our current existence and the technological trajectory of the future.

Assumptions of Functionalism and Computation

The validity of the trilemma rests on two primary pillars: the technological feasibility of simulating a universe and the philosophical validity of simulated consciousness. Bostrom cites work by technologists suggesting that a posthuman civilization could build a planetary-mass computer capable of $10^{42}$ operations per second. Such a machine could simulate the entire mental history of the human race, which Bostrom estimates at roughly $10^{33}$ to $10^{36}$ operations in total, using only a tiny fraction of its capacity.
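A quick back-of-the-envelope check shows why these figures matter. The sketch below reads the numbers in the paragraph above as powers of ten ($10^{42}$ operations per second for the computer, and $10^{33}$ to $10^{36}$ operations for the whole simulated history, as in Bostrom’s paper); the constant and function names are illustrative assumptions.

```python
OPS_PER_SECOND = 1e42      # assumed throughput of the posthuman computer
HISTORY_OPS_LOW = 1e33     # lower estimate of operations for all of human mental history
HISTORY_OPS_HIGH = 1e36    # upper estimate


def seconds_needed(total_ops: float, ops_per_second: float = OPS_PER_SECOND) -> float:
    """Wall-clock time to execute total_ops at a fixed throughput."""
    if ops_per_second <= 0:
        raise ValueError("ops_per_second must be positive")
    return total_ops / ops_per_second


for label, ops in [("low", HISTORY_OPS_LOW), ("high", HISTORY_OPS_HIGH)]:
    print(f"{label} estimate: {seconds_needed(ops):.1e} s of machine time")
```

Under these assumptions, even the upper estimate would occupy the machine for about a millionth of a second, which is why the argument treats the cost of one ancestor simulation as negligible for such a civilization.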

Philosophically, Bostrom adopts “functionalism,” the view that mental states are defined by their causal roles and informational processing rather than their biological substrate. If a computer program can replicate the functional organization of the human brain to a high enough degree of fidelity, it follows that the program would be conscious. This leads to the conclusion that a “simulated person” would be indistinguishable from a “real person” in terms of subjective experience.

Cosmological Evidence and Digital Signatures

Proponents of the simulation hypothesis argue that the universe exhibits several characteristics that are remarkably similar to those of a programmed environment. These features, often called “digital signatures,” suggest that the laws of physics may be optimized for computational efficiency.

Quantum Indeterminacy and Rendering Constraints

One of the most frequently cited pieces of evidence is the “observer effect” in quantum mechanics. In a high-fidelity video game, the engine does not render the entire game world at once; instead, it only renders the portions that are currently being observed by the player to save processing power. Proponents like Rizwan Virk argue that quantum wave-function collapse serves a similar purpose.

Before a measurement is made, a particle exists in a superposition of states—a mathematical probability rather than a physical certainty. It is only when an interaction occurs that the “code” is resolved into a specific value. This optimization technique, known as “rendering on demand,” prevents the universe from having to track the exact state of every atom at all times, which would be computationally prohibitive. Furthermore, quantum entanglement—the instantaneous connection between distant particles—is viewed as a signature of a system where coordinates are just variables in a global database, bypassing the need for physical proximity in three-dimensional space.
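The “rendering on demand” idea borrowed from game engines can be illustrated with a toy lazy-evaluation store. This is only an analogy in code, not a model of quantum mechanics: the class name, the two-valued state, and the seeded random generator are all assumptions made for illustration.

```python
import random


class LazyWorld:
    """Toy 'render on demand' store: a cell has no stored value until first observed."""

    def __init__(self, seed: int = 0):
        self._rng = random.Random(seed)   # stand-in for whatever rule fixes outcomes
        self._resolved = {}               # only observed cells ever use memory

    def observe(self, cell):
        """Return the cell's value, fixing it on first observation."""
        if cell not in self._resolved:
            self._resolved[cell] = self._rng.choice(("up", "down"))
        return self._resolved[cell]

    def resolved_count(self) -> int:
        """Number of cells the 'engine' has actually had to compute."""
        return len(self._resolved)


world = LazyWorld()
first = world.observe((10, 20, 30))
print(first, world.observe((10, 20, 30)) == first, world.resolved_count())
```

The point of the design is that storage and computation scale with the number of observations rather than with the size of the world, which is the efficiency argument proponents appeal to.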

The Discretization of Space-Time

In a digital simulation, there is always a minimum resolution, or “pixel size.” In our universe, the Planck scale appears to provide exactly such a limit. The Planck length ($\approx 1.6 \times 10^{-35}$ meters) and Planck time ($\approx 5.4 \times 10^{-44}$ seconds) are the scales at which the concept of smooth, continuous space-time breaks down.

| Physical Constant | Value | Interpretation as Simulation Parameter |
|---|---|---|
| Planck Length | $1.616 \times 10^{-35}$ m | The minimum “grid” or spatial resolution of the simulated manifold. |
| Planck Time | $5.391 \times 10^{-44}$ s | The “refresh rate” or clock speed of the cosmic processor. |
| Speed of Light | 299,792,458 m/s | The maximum processing speed for information transfer between cells in the grid. |

Proponents argue that the existence of these absolute scales is a direct result of the universe being a discrete calculation. If space were truly continuous, it would require infinite information to describe even a small volume, leading to a “computational catastrophe.” By pixelating space-time, the simulator creates a finite and computable reality.
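The Planck values quoted above are not independent constants; they follow from the reduced Planck constant, the gravitational constant, and the speed of light through the standard definitions $\ell_P = \sqrt{\hbar G / c^3}$ and $t_P = \ell_P / c$. The short Python sketch below reproduces them from CODATA values; the variable names are illustrative.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J*s (CODATA 2018)
G = 6.67430e-11          # Newtonian gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0        # speed of light in vacuum, m/s (exact)

planck_length = math.sqrt(HBAR * G / C**3)   # metres
planck_time = planck_length / C              # seconds: light-crossing time of one Planck length

print(f"Planck length: {planck_length:.3e} m")
print(f"Planck time:   {planck_time:.3e} s")
```

The second line makes the “grid” reading explicit: the Planck time is simply how long light takes to cross one Planck length, so the three rows of the table are tied together by a single relation.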

The Speed of Light as a Processing Bound

The fact that nothing can travel faster than the speed of light (c) is often interpreted as a “hard cap” on the information processing speed of the underlying hardware. In any network or computer system, there is a maximum speed at which data can propagate. If the universe were “real” and infinite, there would be no a priori reason for a universal speed limit; however, in a simulation, such a limit is necessary to ensure that different parts of the simulation remain synchronized and that the “clock rate” is maintained globally.

Primary Proponents and Philosophical Biographies

The simulation hypothesis has attracted support from a diverse array of intellectuals, each providing a unique perspective based on their expertise in philosophy, technology, or astrophysics.

Nick Bostrom: The Analytical Foundations

Biography: Nick Bostrom was born in 1973 in Helsingborg, Sweden. He holds degrees in philosophy, mathematics, logic, and artificial intelligence from the University of Gothenburg and a PhD in philosophy from the London School of Economics. As a long-time professor at Oxford University and the founding director of its Future of Humanity Institute, which closed in 2024, he became a leading voice on existential risks and the ethical implications of future technologies. Bostrom is widely considered the father of the modern simulation argument, moving it from the realm of science fiction into analytical philosophy.

Core Quote: “The Simulation Argument is perhaps the first interesting argument for the existence of a Creator in 2000 years.” (Note: Bostrom often attributes this sentiment to others while acknowledging the theological parallels of his work).

Bostrom’s contribution is primarily the “trilemma,” which provides a logical structure that forces the respondent to justify their belief in base reality. He emphasizes that the argument is “metaphysical” rather than “skeptical,” meaning it makes a claim about the actual nature of the world rather than simply suggesting we cannot know it.

Elon Musk: The Technologist’s Extrapolation

Biography: Elon Musk, born in 1971 in South Africa, is the founder and CEO of SpaceX, Tesla, and Neuralink. A graduate of the University of Pennsylvania with degrees in physics and economics, Musk’s worldview is heavily influenced by “first principles” thinking. His companies are dedicated to pushing the boundaries of what is technologically possible, from colonizing Mars to merging the human brain with AI.

Core Quote: “There’s a one in billions chance we are in base reality. Forty years ago we had Pong—two rectangles and a dot. Now 40 years later, we have photorealistic, 3D simulations with millions of people playing simultaneously… if you assume any rate of improvement at all, then the games will become indistinguishable from reality.”

Musk popularized the hypothesis in a 2016 interview, using the “Pong” analogy to illustrate the exponential growth of computing power. His argument is that because humanity is on a trajectory to create indistinguishable simulations, the likelihood that we are the first civilization to do so—rather than one of millions already created—is infinitesimally small.

Neil deGrasse Tyson: The Cosmological Perspective

Biography: Neil deGrasse Tyson is an American astrophysicist and science communicator who serves as the Frederick P. Rose Director of the Hayden Planetarium. Educated at Harvard, UT Austin, and Columbia, he has spent his career making complex astrophysical concepts accessible to the public. Tyson often uses his platform to discuss the philosophical implications of modern physics, including the “Hubris of Human Intelligence.”

Core Quote: “I think the likelihood may be very high… the day we learn that it is true I will be the only one in the room saying I’m not surprised.”

Tyson’s support for the hypothesis is rooted in the “intelligence gap.” He argues that humans share roughly 98% of their DNA with chimpanzees, yet chimps cannot do trigonometry. If a species existed that was as far “above” us as we are above chimpanzees, their toddlers might be able to do astrophysics calculations in their heads. To such a species, simulating our entire history would be a trivial task for entertainment or research.

Rizwan Virk: The Video Game Framework

Biography: Rizwan Virk is an MIT-educated computer scientist, venture capitalist, and video game developer. He is the founder of Play Labs @ MIT and the author of The Simulation Hypothesis. Virk’s background in building actual simulation engines allows him to draw direct parallels between physics and game development.

Core Quote: “The only mechanism we know of that allows us to run the universe multiple times would be a simulated universe… information gets rendered for us on demand.”

Virk proposed two primary versions of the hypothesis: the “RPG version,” where we are players inhabiting avatars from outside the system, and the “NPC version,” where we are entirely AI constructs. He also developed a “Road to the Simulation Point,” a roadmap of 13 stages that a civilization must pass through to reach the ability to simulate reality.

| Stage | Technology Milestone | Current Status |
|---|---|---|
| Stage 1 | Text-based Adventure Games | Achieved (1970s). |
| Stage 3 | MMORPGs (World of Warcraft) | Achieved (2000s). |
| Stage 5 | Photorealistic VR & AR | In Progress. |
| Stage 7 | Brain-Computer Interfaces | Early Research (Neuralink). |
| Stage 9 | Human-level AI / NPCs | Rapid Advancement. |
| Stage 13 | The Simulation Point | Estimated 50-100 years. |

Hans Moravec: The Robotics Visionary

Biography: Hans Moravec is a professor at Carnegie Mellon’s Robotics Institute and a pioneer in mobile robot navigation and computer vision. He is famous for “Moravec’s Paradox,” which states that while high-level reasoning is computationally “cheap,” low-level sensorimotor skills are “expensive.” Moravec has long argued that biological evolution is merely a precursor to a “mind fire” of rapidly expanding machine intelligence.

Core Quote: “The robots will re-create us any number of times, whereas the original version of our world exists, at most, only once. Therefore, statistically speaking, it’s much more likely we’re living in a vast simulation.”

Moravec’s work in robotics leads him to argue that superintelligent machines will eventually be able to reconstruct the past down to the atomic level. He posits that we are likely the result of such a reconstruction, living in a “SimCity” designed by our descendants to preserve their heritage.

Konrad Zuse: The Pioneer of Digital Physics

Biography: Konrad Zuse (1910–1995) was a German civil engineer who independently developed the first functional programmable computer, the Z3, in 1941. Working in isolation during World War II, he pioneered binary arithmetic and high-level programming. In his later years, he turned his attention to the relationship between computation and physics.

Core Quote: “The universe is being computed.” (The fundamental thesis of Rechnender Raum).

Zuse’s 1969 book, Calculating Space, was the first to formalize the idea of “Digital Physics.” He argued that space is not continuous but composed of discrete cells that change state according to the rules of a cellular automaton. This intellectual foundation paved the way for modern simulation theory by suggesting that physical laws are essentially algorithms.
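To show what “discrete cells that change state according to the rules of a cellular automaton” means in practice, here is a minimal Python sketch of a one-dimensional, two-state automaton with wrap-around edges. It uses the later Wolfram-style rule numbering purely as an illustration; it is not the specific model Zuse proposed, and the function name and grid size are assumptions.

```python
def step(cells: list[int], rule: int) -> list[int]:
    """Advance a 1D binary cellular automaton by one tick (periodic boundary).

    Each cell's next state depends only on itself and its two neighbours.
    `rule` is an integer 0..255 whose bits give the output for each of the
    eight possible (left, centre, right) neighbourhoods.
    """
    n = len(cells)
    nxt = []
    for i in range(n):
        left, centre, right = cells[(i - 1) % n], cells[i], cells[(i + 1) % n]
        index = (left << 2) | (centre << 1) | right   # neighbourhood as a 3-bit number
        nxt.append((rule >> index) & 1)               # look up that bit of the rule
    return nxt


# Start from a single live cell and run rule 90 (next state = left XOR right).
row = [0] * 15
row[7] = 1
for _ in range(6):
    print("".join("#" if c else "." for c in row))
    row = step(row, 90)
```

The entire “physics” of this toy universe is the eight-entry lookup table encoded in `rule`, which is the sense in which digital physics treats laws of nature as local update algorithms.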

Advanced Civilization vs. Divine Creator: The Nature of the Simulator

A central debate within the simulation hypothesis is the identity and nature of the entity running the simulation. This discussion bifurcates into two primary scenarios: a “Naturalist Simulator” (an advanced civilization) or a “Supernatural Simulator” (God).

The Programmer as a Posthuman Civilization

In the “Naturalist” scenario, the simulators are our own descendants or an extraterrestrial species that has reached the “posthuman” stage. These beings exist within their own “base reality” and run simulations for specific purposes:

- Ancestor Simulations: To study their own history or the evolution of their species.

- Scientific Experimentation: To test different physical constants or social models.

- Entertainment: A high-tech version of a video game or an immersive movie.

In this view, the programmer is “God-like” but not a “God” in the traditional sense. They are finite beings who may be subject to the laws of their own universe and could potentially be part of a “nested” simulation themselves. This leads to the “infinite regress” problem, where every simulator is being simulated by a higher-level entity.

The Programmer as a Divine Creator

Conversely, some philosophers and theologians argue that the simulation hypothesis is essentially a modern, technoscientific version of classical theism. If the programmer created our universe, is omniscient regarding its state, and can intervene at will (effectively performing miracles by changing the code), then they meet the functional definition of God.

| Attribute | Religious Concept | Simulation Equivalent |
|---|---|---|
| Creation | Genesis / The Big Bang | Initializing the simulation. |
| Omniscience | God sees all hearts/minds | The simulator has access to every memory address. |
| Omnipotence | Miracles / Divine Will | Administrative overrides of physical variables. |
| Judgment | Afterlife / Karma | Evaluation of simulation logs or “soul” uploading. |
| Illusion | Maya / Veils of Reality | The rendered virtual world. |

Christian philosopher James Anderson distinguishes between different levels of this “simulation-god” relationship. In “Scenario 1: The Sims,” we are purely fictional characters with no independent existence. In “Scenario 2: The Matrix,” we exist independently (as biological bodies) but our senses are manipulated. “Scenario 3: Brains in a Computer” proposes that we are conscious programs running on a physical machine in the simulator’s world. Each of these scenarios carries different implications for the “Problem of Evil.” If the programmer is benevolent, why did they code suffering into the simulation? Some proponents suggest “Aesthetic Theodicy,” where suffering is necessary for the simulation to be “interesting” or to produce complex data.

Scientific Critiques and Technical Barriers

Despite its popularity, the simulation hypothesis faces rigorous critiques from the physics community, primarily focusing on the staggering computational resources required and the “unnecessary” complexity of nature.

The Problem of “Hidden Complexity”

Physicist Frank Wilczek, a Nobel laureate, argues that the universe does not look like a “good” simulation. A programmer looking for efficiency would not waste resources simulating the internal mechanisms of a proton or the trillions of unobserved galaxies in the deep universe. Wilczek points out that the “hidden complexity” of the subatomic world and the vastness of the cosmos are largely irrelevant to human-level experience.

If the goal were simply to simulate human consciousness, much simpler, “low-res” physics could have been used. The fact that the universe remains consistent and complex even at the smallest and largest scales suggests it is a primary physical reality rather than a targeted simulation.

Thermodynamic and Energy Limits

The “Information is Physical” principle, derived from Landauer’s Principle and the Bekenstein Bound, provides a strict limit on the energy required for computation. To simulate even a small portion of the universe at full resolution (the holographic limit), a computer would need to consume an impossible amount of energy.

| Target for Simulation | Required Energy/Power | Comparison/Context |
|---|---|---|
| Visible Universe (Full Res) | $10^{13}$ Milky Way masses | The total rest-mass energy required to “initialize” the data. |
| Earth (Holographic Limit) | Mass of a globular cluster | Required for initialization and every subsequent time-step. |
| Earth (Low Res – Neutrino Bound) | $10^{72}$ watts | Far exceeds the power output of all stars in the observable universe. |

A 2025 study concluded that if the simulator’s universe shares our physical laws, they simply could not afford the “power bill” to run our universe. This suggests that if we are in a simulation, the “parent” universe must have fundamentally different (and more abundant) energy or information-carrying capacity than our own.
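The Landauer bound mentioned above can be evaluated directly: erasing one bit at temperature $T$ dissipates at least $k_B T \ln 2$ joules. The Python sketch below computes that floor and, as a purely illustrative extension, how many bit erasures a given rest mass could pay for if it were converted entirely to energy. It does not reproduce the cited study’s figures; the function names and the example temperature and mass are assumptions.

```python
import math

K_B = 1.380649e-23       # Boltzmann constant, J/K (exact in the 2019 SI)
C = 299_792_458.0        # speed of light, m/s (exact)


def landauer_energy_per_bit(temperature_k: float) -> float:
    """Minimum energy in joules dissipated when one bit is erased at temperature T."""
    if temperature_k <= 0:
        raise ValueError("temperature must be positive")
    return K_B * temperature_k * math.log(2)


def max_bit_erasures(mass_kg: float, temperature_k: float) -> float:
    """Upper bound on bit erasures funded by converting mass_kg entirely to energy."""
    return mass_kg * C**2 / landauer_energy_per_bit(temperature_k)


# Illustrative inputs: room temperature and one kilogram of rest mass.
print(f"{landauer_energy_per_bit(300.0):.3e} J per bit at 300 K")
print(f"{max_bit_erasures(1.0, 300.0):.3e} bit erasures per kg at 300 K")
```

Because the bound scales linearly with temperature, a colder simulator pays less per bit, but the cost never reaches zero for irreversible operations, which is the core of the “power bill” objection.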

The Conflict with Relativity

Sabine Hossenfelder, a theoretical physicist, argues that the simulation hypothesis is “pseudoscience” because it often relies on the assumption that space-time is discretized into a grid or lattice. Any such lattice structure would violate the principle of “Lorentz Covariance,” which is the foundation of Einstein’s Special Relativity. In a grid-based universe, light would travel at slightly different speeds in different directions relative to the grid axes. To date, no experiment has ever detected such anisotropy, making the “grid-based simulation” model inconsistent with the most well-tested theories in physics.
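The direction dependence described above can be seen in a toy model. The sketch below assumes a scalar wave equation discretized in space on a simple cubic lattice with the standard nearest-neighbour Laplacian, for which the dispersion relation is $\omega^2 = (4c^2/a^2)\sum_i \sin^2(k_i a/2)$. This is an illustrative textbook calculation, not the analysis used by Hossenfelder or in any specific paper; the function name and the units ($a = c = 1$) are assumptions.

```python
import math


def phase_speed(direction, k: float, a: float = 1.0, c: float = 1.0) -> float:
    """Phase speed of a plane wave with wavenumber k on a cubic lattice of spacing a.

    `direction` is any non-zero 3-vector; it is normalised internally.
    Uses the nearest-neighbour discrete Laplacian dispersion relation.
    """
    norm = math.sqrt(sum(d * d for d in direction))
    components = [k * d / norm for d in direction]
    omega = (2.0 * c / a) * math.sqrt(
        sum(math.sin(ki * a / 2.0) ** 2 for ki in components)
    )
    return omega / k


# Compare a wave travelling along a grid axis with one along the body diagonal.
for k in (1e-3, 0.5, 1.0):
    v_axis = phase_speed((1, 0, 0), k)
    v_diag = phase_speed((1, 1, 1), k)
    print(f"k*a={k:g}: axis={v_axis:.6f}  diagonal={v_diag:.6f}")
```

In this toy model the two speeds agree at long wavelengths and separate as the wavelength approaches the lattice spacing, which is why searches for such anisotropy focus on the highest accessible energies.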

Potential Tests for a Simulated Reality

Despite these critiques, researchers have proposed experimental methods to search for “glitches” or signatures that might reveal the underlying code.

Anisotropy in Cosmic Rays

The most famous proposed test comes from Silas Beane, Zohreh Davoudi, and Martin Savage. They hypothesized that if the universe is simulated on a “Wilson fermion” lattice (a common tool in Lattice QCD), the highest-energy cosmic rays would exhibit a degree of rotational symmetry breaking.

The lattice acts like a diffraction grating for high-energy particles. By studying the distribution of arrival directions of these cosmic rays, scientists could look for a cubic symmetry that matches the simulator’s grid. While current data from high-energy astrophysical observatories have not yet found this signature, the precision of these measurements is expected to increase with the next generation of detectors.

Computational Impossibility Results

Using Rice’s theorem from computability theory, some researchers have investigated whether it is even possible for a simulation to “know” itself. Rice’s theorem states that every non-trivial semantic property of programs (a property of what a program computes, as opposed to how its code is written) is undecidable. This might mean that a simulation could never contain a perfect simulation of itself (avoiding infinite regress) and that any evidence we find for the simulation could itself be a simulated “Easter egg” designed to mislead us.

Thematic Epilogue: The Convergence of Code and Consciousness

The simulation hypothesis ultimately serves as a profound metaphysical mirror, reflecting humanity’s technological state and its eternal quest for meaning. As a cosmological proposal, it challenges the divide between the material and the mental, suggesting that the “hard problem of consciousness” might be resolved by recognizing that both the brain and the mind are expressions of the same underlying information.

Whether one views the “Programmer” as an advanced civilization or a divine being, the hypothesis forces a reassessment of human agency and ethics. If our actions are part of a larger computation, do they lose value, or do they gain significance as data points in a grand experiment? Proponents like Rizwan Virk suggest that this realization should lead to “curiosity and humility,” as we realize we are both players and avatars in a cosmic drama.

In the final analysis, the simulation hypothesis may never be “proven” in the traditional sense, as any proof could be part of the program itself. However, it provides a vital framework for the future of cosmology, encouraging physicists to look at the universe not just as a collection of matter, but as a structure of information. As Neil deGrasse Tyson suggests, we are “stardust brought to life,” and whether that stardust is biological or digital, the journey to understand the laws of the game remains the highest calling of the human mind.
